Quality and Evolution

After an elaborate evaluation of ThingsBoard’s vision and the application’s architecture, we hereby present a review of different quality and maintainability aspects of the ThingsBoard open source system.

Software Quality Processes

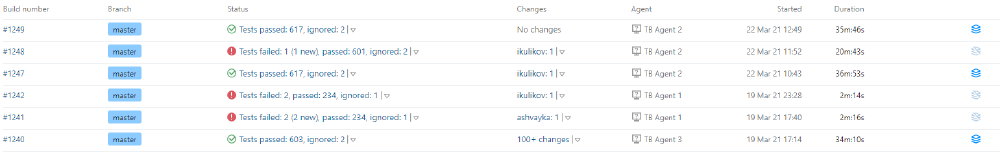

Thingsboard’s code base contains some tests for the backend code, but does not contain any useful end-to-end tests. In total, the last succeeding CI build on the master branch contains 603 tests, covering 75% of the classes, 44.6% of the methods or 46.1% of the lines. The full test suite takes 34 minutes to run, resulting in developers not being very likely to run it themselves.

Moreover, these CI/CD test-results seem to be ignored when pushing to the master branch, as multiple builds have failed on this branch on the day of writing. In fact, the current master branch build is failing as this is written.

Key Elements of C/I processes

Tests will be run upon pushing to branches/PRs. Nothing else is done in this C/I process. C/I processes in themselves as well as their results are difficult to get to. The links on the build status (checkmarks and crosses) require authentication, leaving the only way to get to the C/I without authenticating being the “TB Builds Server” badge in the readme.

Figure: Figure: Pushing a failing build to master.

Rigor of Test Processes and Role of Test Coverage

The rigor of the test processes is low, as there is no predefined way on how to determine the test cases required for the feature implemented, nor is a target minimum test percentage set. Furthermore, the role of the test coverage for this project is lost to the writer of this blog post too, as failing builds are merged to the main branch. Whenever a build fails, you would expect a change in either code or test, resulting in a succeeding build, before merging to the main branch. Some of the tests also consist of just a TODO comment.1

Past Hotspots

A factor that certainly is of influence on the quality and maintainability of code, is the rate at which this code evolves or changes. In order to gain insight into this information, we went through the ThingsBoard GitHub repository to search for the 100 most changed files in the main branch2 and the top 10 most changed files per major release branch34.

A large portion of these files are configuration files: the number one most changed file is thingsboard.yml, and more than one third of the top 100 are pom.xml files. This is not surprising, since one can imagine that changes in parts of the code may very well have consequences for the project’s dependencies/structures/other configurations. Also, a microservice architecture, such as ThingsBoard’s, generally has a lot of different configuration files and settings5. These files are disregarded in the rest of this analysis because they don’t provide much deeper insight into the actual code quality.

In releases 1.X, it is clear that ThingsBoard is a very new application. Most of the changed files have to do with APIs, connectors and deployment and not so much with business logic. ThingsBoard is establishing a base application from which they can extend later. Hotspot components for these releases include the docker files, user interface and transport, database and telemetry handlers.

From release 2.0 onwards, one can tell that ThingsBoard has grown quite a lot and gained popularity and traction in the community and among customers. Releases 2.X all have to do with scaling up. For example, consider the addition of many different locales and the move towards a microservice architecture. These releases can be characterized by structural changes and the refactoring of controller classes and files. Hotspot components are locale files for the UI, rule engine nodes and chain, the core controller and actor components.

Once the dust had settled on these upscalings, ThingsBoard moved on to do some quality control of all previous changes in releases 3.X. The main change is a major update of the UI framework, but also the database and authentication methods go through a fine-tuning and improvement process in this release. The hotspot components are the new UI components, rule engine, core controller and actor components.

Future Hotspots

As defined earlier in the report on ThingsBoard’s product vision6, ThingsBoard is planning to work on three aspects of the application: (1) improve scalability and robustness, (2) direct development towards edge gateways and (3) ease the development process by implementing Python and Javascript gateway SDKs.78

The first goal is likely to have the largest effects in the core controller component, since this component is responsible for processing requests and data manipulation. This particular component has been one of the most modified in ThingsBoard’s code base since the beginning and this is reflected in the code. There are a lot of safety checks and both functional methods as well as entire class files tend to be longer than average in ThingsBoard’s code base. The developers should be wary of exaggerating this discrepancy any further when working on these new developments.

The second goal will have an effect on the transport components. Improving/extending edge gateways means scaling up the capabilities of the ThingsBoard device connector services, allowing for more advanced control features or more diverse device supports 9. ThingsBoard wants to add support for GPIO10 and FTP11. The current architecture already allows easy integration of such horizontal scalings. ThingsBoard also wants to add RPC calls to connectors through the gateway. This requires some more work, so one can expect some significant modification to the thingsboard.server.actors.rpc package in the future.

The third goal will be an extension into thus far unexplored territory. It could be expected that especially for the points where ThingsBoard interacts with external parties, they will try to allow for a broader range of SDK integrations to simplify customization by customers. Therefore extra attention should go into the readability and maintainability of the rule engine component and, again, the transport component.

Code quality

For an evaluation of the code quality, we focus on the most changed component in the near past and future. This is core component, which has its code in the thingsboard.server.controller12 package. The table below shows an evaluation of different quality measures for this piece of code.

| Measure | Controller | Suggestions |

|---|---|---|

| Readability | (+) Standardized and clear naming conventions are used throughout. (+) The code seems organized and well isolated; there is no duplication. (-) The code has no comments and no JavaDoc. | - Add JavaDoc13 to increase readability and support automated documentation. |

| Singularity | (+) By far the most methods have a single responsibility. (-) The larger classes could have been split up in more (sub)components. | - Construct guidelines on maximum file sizes. |

| Complexity | (+) Many methods are short, simple and have a cyclomatic complexity14 <4. (-) There are a few longer methods with a cyclomatic complexity > 8 | - Use (automated) code checkers to warn against complex methods. |

| Length | (+) Most methods are <15 lines (-) Some classes have 60 lines, others 450 lines. (-) There are still a few methods with >40 lines. | - Use guidelines to enforce consistency. - Construct guidelines on maximum complexity/line number for methods. |

| Abstraction | (+) All 17 controllers in the package extend from the same abstract BaseController class. | |

| Tests | (+) All controllers have a corresponding test suite with at least 1 test. (-) Some classes are tested thoroughly, others hardly. (-) The test code itself is often lengthy and complex. | - Enforce a minimum test coverage. - Use reviews to verify test presence and quality. |

Quality culture

To get insight into the quality culture at ThingsBoard, we examined a number of issues/pull requests for big releases, such as v1.2, v2.0, 2.4, 3.04, and for a number of active (open or closed) issues15. It immediately stands out that there is very little online discussion going on in the large merge requests. None of the commits that were examined, which can be hundreds per merge request, show evidence of reviews or discussions (see the screenshot below).

Figure: Figure: Evidence of the lack of discussion in large MRs.

Perhaps reviewing and discussion is done in real life by the core developers, but it would be better to then leave a summary or overview of discussion points. As an outsider, it is difficult to follow the development process and reason about adjustments or fixes.

Only issues that are made by third parties come with a lot of discussion, but this is often because the external person has a question and not so much to discuss specific code (quality). Also, such issues are often not very relevant due to the small size of the contribution that they ask for.

It also appears that, while test folders are present and populated in most project sub-directories, these are not being maintained in every update. For example, in release (MR) 1.2, 196 files were changed, of which 57 were java source code, while only 5 test files were modified in this release. The same goes for other large merge requests, such as for version 2.0 (168 java class files changed, only 23 test files) and 3.0 (61 java class files changed, 10 test files changed). Since there are still some tests being developed, it seems a logical conclusion that ThingsBoard has decided to prioritize testing for certain components only. These appear to be the controller, transport and rule components. Another thing that stands out is that whenever new (sub)components are being developed, these come accompanied with a bunch of tests. However, in commits for updating existing files, the test suite does not often get updated as well, so maintaining tests and build success does not appear to be a high priority.

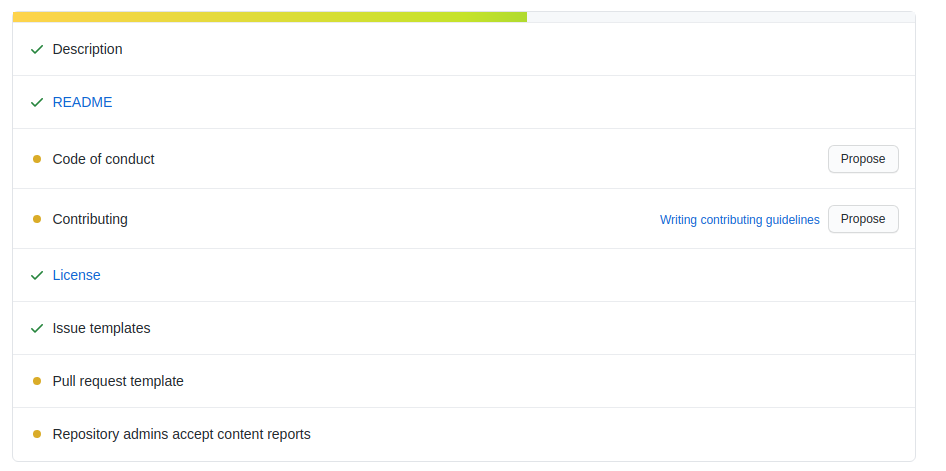

As a final remark, ThingsBoard would probably benefit from adding a CONTRIBUTING.md file on their Git. From analyzing the pull requests, it became clear that outside/third party contributors don’t add tests to their contributions, for example. This is not unexpected, because ThingsBoard does not provide community guidelines on this. In general, there are a number of additional steps to take for ThingsBoard to become a more evolved open source repository, as can be seen in the GitHub screenshot below.

Figure: Figure: GitHub’s review of ThingsBoard’s community profile.

Technical debt

As mentioned in the previous sections, the technical debt is rather high for this project, as is usual with larger, older projects. One example can be found in the test files, these are not often updated. This lack of updating / adding to the tests results in outdated tests and CI builds that provide a false sense of security. Most methods in the code lack documentation, with only a few methods containing documentation. This makes it difficult for new developers to add to the project.

| Technical Debt | Remark |

|---|---|

| Testing | Tests are outdated and are not consistently added together with new features. Some tests are empty methods containing “TODO” comments. 1 |

| Documentation | Most methods in the documentation do not contain any documentation. Only a few methods include sparse documentation. |

| Code Smells | Code contains “TODO” statements, these should be put in an issue tracker. |

Conclusion

It is clear that the developers of ThingsBoard hold themselves intuitively to high code quality standards. Nevertheless, there is still room for improvement in the quality control process of ThingsBoard, both in automated checks (esp. CI/CD) and manual instructions/guidelines.

-

https://github.com/thingsboard/thingsboard/blob/d31358b2ea24356223f064996714b602504293c7/dao/src/test/java/org/thingsboard/server/dao/sql/asset/JpaAssetDaoTest.java#L166-L174 ↩︎

-

git log –pretty=format: –name-only | sed ‘/^\s*$/d’ | sort | uniq -c | sort -rg | head -100 ↩︎

-

git log –pretty=format: –name-only | sed ‘/^\s*$/d’ | sort | uniq -c | sort -rg | grep -v ‘pom.xml|.json|.yml’ | head -10 ↩︎

-

https://www.atlassian.com/continuous-delivery/principles/configuration-management ↩︎

-

https://2021.desosa.nl/projects/thingsboard/posts/1.-product-vision/ ↩︎

-

https://thingsboard.io/docs/iot-gateway/what-is-iot-gateway/ ↩︎

-

https://en.wikipedia.org/wiki/General-purpose_input/output ↩︎

-

https://github.com/thingsboard/thingsboard/tree/d31358b2ea24356223f064996714b602504293c7/application/src/main/java/org/thingsboard/server/controller ↩︎

-

https://docs.oracle.com/javase/8/docs/technotes/tools/windows/javadoc.html ↩︎