The first release of XWiki was in 2003 1, which makes this the year that XWiki turns 18. In the time that XWiki has existed, technology has changed enormously, and for any software to keep up with such changes, a lot of active development is needed. For an application to keep functioning despite these changes, keeping its code quality at a high standard is critical. In this blog post we will talk about XWiki’s software quality processes, continuous integration, test processes, code quality and hotspots, quality culture and accumulated technical debt. We will start by discussing XWiki’s general software quality processes.

Software quality processes

One of the software quality processes of XWiki is performed by its committers. When contributors want to make a change or addition to the source repositories, they first have to open a pull request (PR). Only when the committers reviewing this PR are satisfied with its quality and relevance is it merged 2. This way, all changes to the source code are manually reviewed for quality.

Another quality process of XWiki is executed by the continuous integration (CI). This process is not only used for pull requests, but is run any time code is committed. The full functionality of the CI will be discussed in the next section, but what is important for now is the quality profile. This profile is defined in the pom.xml file of XWiki Commons 3 and uses the JaCoCo Maven plugin to check the test coverage of the entire project. The expected coverage must be defined separately for every module, since the achievable percentage can differ. The XWiki developers do note that good values are above 70%. If a module does not reach the test coverage that is defined for it, the build fails. This is another way in which the quality of contributions is checked.
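To make the idea of per-module thresholds concrete, here is a minimal Java sketch of a coverage gate. It is only an illustration: the class name `CoverageGate`, the module names and the threshold values are hypothetical, and in XWiki the real check is performed by the JaCoCo Maven plugin configured in the pom.xml, not by code like this.

```java
import java.util.Map;

/** Hypothetical sketch of a per-module coverage gate; not XWiki's actual implementation. */
final class CoverageGate {
    /** Minimum covered/total ratio per module (illustrative values only). */
    private final Map<String, Double> minimumRatioByModule;

    CoverageGate(Map<String, Double> minimumRatioByModule) {
        this.minimumRatioByModule = Map.copyOf(minimumRatioByModule);
    }

    /** Returns true when the module reaches the threshold defined for it. */
    boolean passes(String module, long covered, long total) {
        Double minimum = minimumRatioByModule.get(module);
        if (minimum == null) {
            throw new IllegalArgumentException("No threshold defined for module: " + module);
        }
        if (total <= 0) {
            return true; // nothing to cover, so nothing can be missing
        }
        return (double) covered / total >= minimum;
    }

    /** Throws to mimic a failing build when coverage is below the threshold. */
    void check(String module, long covered, long total) {
        if (!passes(module, covered, total)) {
            throw new IllegalStateException("Coverage below threshold for module: " + module);
        }
    }
}
```

With thresholds of 0.70 for one module and 0.55 for another, a module at 60% coverage would pass the second threshold but fail the first, which is why the values are set per module rather than once for the whole project.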

Elements of continuous integration process

XWiki currently uses Jenkins 4 as its CI tool. This choice is motivated by a large comparison that can be seen here. One of XWiki’s requirements for the CI tool is that it must be able to use a custom Docker build image. Using Jenkins, XWiki can use its own Jenkins Agent Docker image to spawn Jenkins Agents for the CI. This image is automatically built by Docker Hub.

In the repositories of the XWiki application, Jenkinsfiles can be found. These files define the different jobs that Jenkins has to do 5. They make use of a shared global library that is also developed by XWiki. Such a Jenkinsfile (for example the one in XWiki Platform) decides what kind of build is going to be done. These builds can differ: for example, the “mvn deploy” command is run for “master” and “stable-” branches and only “mvn install” for the rest. It can also be decided whether or not the quality profile discussed in the previous section is run. Additionally, a scheduler is defined in this file, which triggers one Docker-based functional test every day at 10 PM and another Docker-based functional test every week on Saturday.
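The branch-based choice between “mvn deploy” and “mvn install” can be sketched as follows. This is a hedged illustration in Java: the real logic lives in XWiki's Jenkinsfiles and shared library (which are Groovy), and the class and method names here are hypothetical.

```java
/** Hypothetical sketch of the branch-based build choice described above. */
final class BuildPlan {
    private BuildPlan() {
    }

    /**
     * Returns the Maven goal for a branch: "deploy" for "master" and
     * "stable-" branches, "install" for everything else.
     */
    static String mavenGoalFor(String branchName) {
        boolean deployable = branchName.equals("master") || branchName.startsWith("stable-");
        return deployable ? "deploy" : "install";
    }
}
```

Keeping the decision in one small function makes it easy to see that only the long-lived branches publish artifacts, while feature branches are only built and tested.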

The progress and results of the CI of the different XWiki repositories can be seen here.

Test processes and test coverage

In many development projects, testing is regarded as at least as important as development itself: better to have less functionality that works correctly than much functionality with equally many bugs. That this approach is also taken in the XWiki project can quickly be seen from both the documentation and the development history.

First of all, a testing strategy is provided for contributors. This strategy states that committed code must be accompanied by automated tests that cover the functionality created or altered by the code. These tests are executed by the CI at every commit. Automated checks make sure that added code does not reduce the overall test coverage of the module that the new code affects. Furthermore, developers should frequently run tests locally. The current overall coverage of the core of XWiki is 68.65% 6 and is measured using a combination of statement, branch and method coverage 7.

Because XWiki is a large and diverse project, there are naturally many types of tests that can be performed. To guide developers in determining what type of tests they need to write, the XWiki community has created a guideline. It states that every feature requires one functional test. However, as these types of tests are costly, the number of functional tests should be limited. When more tests are required or when several different forms of similar use cases need to be tested, unit tests and integration tests are recommended. For unit testing in Java, JUnit 5 is used. Jasmine is used for JavaScript-based unit testing.

Other types of testing that are performed regularly are performance tests, responsiveness tests (to verify the responsiveness of the XWiki UI on mobile devices, for example), accessibility tests and HTML5 validity tests. The last two types are performed at every commit to the project. Lastly, a wide variety of manual tests are performed from time to time by specifically assigned contributors.

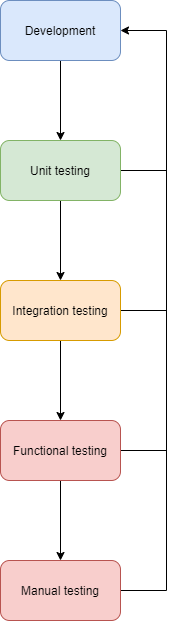

An overview of the testing strategy is given in the figure below.

Figure: An overview of a possible development flow

To improve the structure of the project, test code is placed within the modules of the corresponding functionality, and mocking is used extensively to remove dependencies on extensions during testing. The frameworks used for this are Mockito and WireMock.
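The idea behind mocking can be shown with a small, dependency-free Java sketch. All names here (`PageStore`, `PageTitleService`, `InMemoryPageStore`) are hypothetical and not taken from XWiki. The test double is written by hand to keep the example self-contained; a framework such as Mockito would generate an equivalent stand-in instead.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

/** Dependency that would normally reach a database or another extension (hypothetical). */
interface PageStore {
    Optional<String> loadTitle(String pageId);
}

/** Code under test: it only knows the PageStore interface (hypothetical). */
final class PageTitleService {
    private final PageStore store;

    PageTitleService(PageStore store) {
        this.store = store;
    }

    /** Returns the trimmed title, or "Untitled" when none is stored or it is blank. */
    String displayTitle(String pageId) {
        return store.loadTitle(pageId)
            .map(String::trim)
            .filter(title -> !title.isEmpty())
            .orElse("Untitled");
    }
}

/** Hand-written test double that replaces the real store during a test. */
final class InMemoryPageStore implements PageStore {
    private final Map<String, String> titles = new HashMap<>();

    InMemoryPageStore with(String pageId, String title) {
        titles.put(pageId, title);
        return this;
    }

    @Override
    public Optional<String> loadTitle(String pageId) {
        return Optional.ofNullable(titles.get(pageId));
    }
}
```

Because `PageTitleService` receives its dependency through the constructor, a test can pass in `InMemoryPageStore` and exercise the title logic without starting any real storage or extension.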

How the testing strategy is applied in practice will be illustrated later in this blog.

Code Quality and hotspots

To keep the code of XWiki readable and maintainable, XWiki has developed standard code styles for the different languages used in the project. The most important of these is the Java Code Style, but XML code, HTML and CSS code, and XWiki XML Files also have a defined code style.

To ensure a specific coding style for Java code, Checkstyle is used. This is a development tool that helps programmers write Java code that adheres to a coding standard 8. To enforce this, Maven builds automatically fail on a violation, making it impossible to ignore the code style. Checkstyle enforces indentation, naming conventions, small code practices and comments placed at methods. These comments must explain the functionality of the methods and are used for generating Javadoc documentation. To make sure the comments are correct and understandable, they are also checked by other developers when a pull request is submitted. Besides comments, the naming of classes, methods and variables, and small code practices such as toString implementations, are defined and checked when code is submitted. This keeps the Java code maintainable and understandable for other developers.

To get a better grasp of the use of these code styles, we will look at some of the programming hotspots of XWiki. Currently no big features are being added to XWiki and most changes are small and often for maintenance purposes only. This, in combination with the lack of a concrete plan for future releases of XWiki, makes it difficult to predict future hotspots. As a result, we will only look for programming hotspots based on changes made in the previous year.

XWiki is divided into three different parts: XWiki Platform, XWiki Commons and XWiki Rendering. XWiki Platform is the biggest one of the three and offers a generic wiki platform on top of which the runtime services for XWiki-applications are built 9. It makes use of XWiki Commons as a set of libraries common to other top level wiki projects 10 and XWiki Rendering, which is the rendering system that converts textual input to different supported syntaxes 11. All three of these projects are held to the same code quality standards. Still, because XWiki Platform is the most active one of the three, more discussion about code quality can be found there.

When going through the recent changes of XWiki Platform, it is difficult to point out a few active coding hotspots. While large parts of the project are untouched, the parts that are actively changed seem to be spread randomly throughout the project. This could be explained by the lack of new features coming to the project and the fact that most recent changes consist of bug fixes and library upgrades 12. Still, XWiki states as a general goal for all releases to have more tests, better Javadoc, more documentation and code clean-ups/refactorings 13. So, while it is difficult to predict where code quality will be improved in the future, XWiki does promise that it will be.

Quality culture

To show the quality culture of the XWiki community, we compiled a list of issues and pull requests of architecturally significant value, defined as issues and pull requests related to important components of XWiki. We then randomly selected ten issues and ten pull requests from this list to obtain a proper sample and analysed them. The investigated PRs and issues are summarized in the table below.

| Issues | Pull Requests |
|---|---|
| XWIKI-18429 | XWIKI-15833 |
| XWIKI-17508 | XWIKI-17524 |
| XWIKI-17509 | XWIKI-8120/7076 |
| XWIKI-16370 | XWIKI-17318 |
| XWIKI-12639 | XWIKI-6073 |
| XWIKI-30 | XWIKI-17895 |
| XWIKI-94 | XWIKI-12639 |
| XWIKI-17069 | XWIKI-10252 |
| XWIKI-ANDAUTH-51 | XWIKI-17389 |
| XWIKI-188449 | XCOMMONS-602 |

To get a better overview, we selected interesting issues and PRs to illustrate our findings. From the issues, several things can be learned. First, discussions do not always take place within the issues themselves. Instead, important issues often have associated topics on the XWiki forum. An example of this is XWIKI-17069. While the forum can be a good place for active community discussions, potentially even better than the issue board itself, it also makes it harder for new contributors to find and contribute to discussions. This is made worse by the fact that proposals for new functionality are explained on the design website of XWiki instead of in their corresponding issues. An example is issue ANDAUTH-51. The use of the issue board, the forum and the design page means that there are three different sources of information that can relate to a single issue.

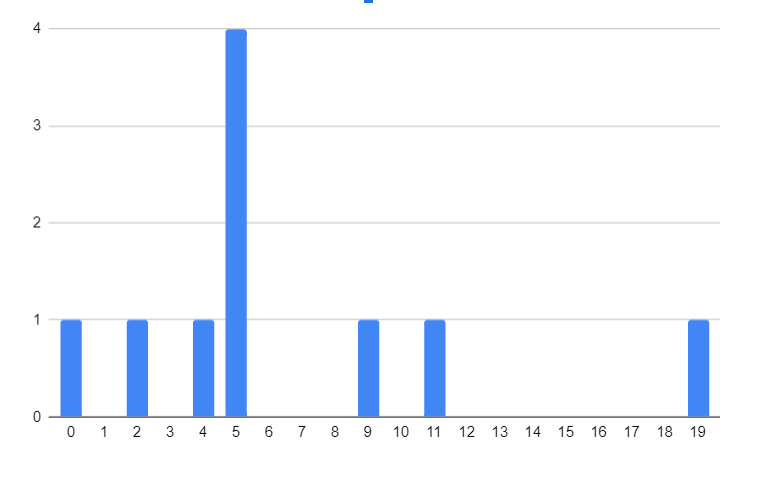

The figure below shows the number of comments in discussions related to the investigated issues. These comments can either be placed within the specific issue itself or on a related forum discussion.

Figure: An overview of number of comments on investigated issues

In this figure, it can be seen that virtually every significant issue is accompanied by a discussion, ranging in length from two to nineteen comments for issues. The average number of comments calculated for PRs is 64.6. Different types of discussions can be seen: some are focused on developers giving feedback on ideas and code quality, while others take place between developers who do not agree on functionality or on the approaches taken by the creators of issues and PRs. The important thing is that virtually every investigated discussion is meaningful and addresses important aspects of issues or PRs. However, it should also be noted that some discussions only contain comments written by the creator of the corresponding issue. In these cases, the discussion space is used to provide updates about the issue.

The number and content of the discussions therefore show that the quality of the XWiki code is important to the community.

A similar thing can be said about testing. Earlier we saw that XWiki developers are, in theory, required to create automated tests for every significant commit. The investigated PRs show that this approach is strictly enforced in practice: there were no cases of untested code being merged via pull requests.

Technical debt

Backward compatibility is a huge focus and a core value of the XWiki community. Users have been developing applications inside the XWiki platform using APIs, some of which have existed for years. Thanks to development practices that ensure APIs don’t break, 10-year-old extensions still work on the newest versions of XWiki 14. While this is a great attribute on the user side of the project, the developer side bears the great responsibility of ensuring it 15. When a big change or update is made to the XWiki architecture, a lot of effort is put into preserving backward compatibility, even if this means some solutions are more complex than they could be. To limit the amount of technical debt that results from complying with all old API methods, a deprecation system has been set up. To deprecate code, it first has to be tagged with the @Deprecated annotation, and when the core code no longer uses any of the deprecated APIs, it can be moved to a legacy module. This way, old code can be cleanly removed to keep working code clean, while still being available for users 16.
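The first step of this deprecation process can be illustrated with a short Java sketch. The class and method names are hypothetical and only show the general pattern: the old method stays available, is marked as deprecated, and delegates to its replacement, so that core code can stop calling it before it is moved to a legacy module.

```java
/** Hypothetical API class illustrating the deprecation step described above. */
final class DocumentApi {
    /** Newer replacement method (hypothetical name). */
    String getRenderedTitle(String rawTitle) {
        return rawTitle == null ? "" : rawTitle.trim();
    }

    /**
     * Old entry point kept for backward compatibility.
     *
     * @deprecated use {@link #getRenderedTitle(String)} instead
     */
    @Deprecated
    String getTitle(String rawTitle) {
        // Delegate so that old and new callers get identical behaviour.
        return getRenderedTitle(rawTitle);
    }
}
```

Existing extensions that call `getTitle` keep working, while the compiler warns new callers away from it.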

An example of technical debt that has been present since 2006/2007 is the xwiki-platform-oldcore module, which contained the whole of XWiki before 2006. The idea was to extract smaller modules from this monolithic module and write a new model until the old core completely disappears. That clearly has not happened yet, and while the intention was not to add anything new to the oldcore (mentioning technical debt as a reason 17), the GitHub repository is full of commits updating this module. Other coding practices in the community also show signs of technical debt, and while the developers seem very conscious of it, solutions that add to technical debt are sometimes chosen as the best current fix (example issue).

Hopefully this blog post provides some insights into the quality and evolution of XWiki.

- https://www.xwiki.org/xwiki/bin/view/XWikiOrgCode/Heartbeat
- https://dev.xwiki.org/xwiki/bin/view/Community/Committership
- https://github.com/xwiki/xwiki-commons/blob/master/pom.xml#LC2772
- https://massol.myxwiki.org/xwiki/bin/view/Blog/ScheduledJenkinsfile
- http://maven.xwiki.org/site/clover/20210208/XWikiReport-20171222-1835-20210208-0128.html
- https://openclover.org/doc/manual/4.2.0/general--about-code-coverage.html
- https://github.com/xwiki/xwiki-platform/blob/master/README.md
- https://github.com/xwiki/xwiki-commons/blob/master/README.md
- https://github.com/xwiki/xwiki-rendering/blob/master/README.md
- Bogart, Christopher, et al. (2016) “How to break an API: cost negotiation and community values in three software ecosystems.” Proceedings of the 2016 24th ACM SIGSOFT International Symposium on Foundations of Software Engineering
- https://dev.xwiki.org/xwiki/bin/view/Community/DevelopmentPractices#HBackwardCompatibility
- https://dev.xwiki.org/xwiki/bin/view/Community/DevelopmentPractices#HMigratingawayfromtheOldCore